Site Reliability Engineering (SRE) has evolved from a niche Google practice into a core engineering discipline adopted by startups and enterprises alike. Today, SRE is not just about keeping systems alive—it is about engineering reliability as a feature, balancing speed with stability, and using automation to scale operations intelligently.

In this modern guide, you will learn the top 15 SRE tools used in real-world production environments, how they fit into the SRE workflow, and how to choose the right stack for your organization or career growth.

What Is Site Reliability Engineering (SRE)?

Site Reliability Engineering applies software engineering principles to infrastructure and operations problems. Instead of relying on manual operations, SRE teams build automated systems to ensure:

- High availability

- Low latency

- Predictable releases

- Fast incident recovery

- Strong observability

At the core of SRE lie concepts such as SLIs (Service Level Indicators), SLOs (Service Level Objectives), and error budgets. Tools are the backbone that make these concepts measurable and actionable.
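To make these terms concrete, here is a minimal sketch of how an SLI and error budget might be computed for a request-based service. The function name, the dictionary keys, and the example numbers are illustrative assumptions, not part of any specific tool.

```python
def error_budget(slo_target: float, total_requests: int, failed_requests: int) -> dict:
    """Summarize the SLI and error-budget consumption for one measurement window."""
    if total_requests <= 0:
        raise ValueError("total_requests must be positive")
    # SLI: the fraction of requests that succeeded in the window.
    sli = (total_requests - failed_requests) / total_requests
    # Error budget: the number of failures the SLO tolerates in the window.
    allowed_failures = (1 - slo_target) * total_requests
    # Share of the budget already spent; values above 1.0 mean the SLO is violated.
    consumed = failed_requests / allowed_failures if allowed_failures > 0 else float("inf")
    return {
        "sli": sli,
        "allowed_failures": allowed_failures,
        "budget_consumed": consumed,
        "slo_met": sli >= slo_target,
    }


# Hypothetical window: 99.9% SLO, one million requests, 400 failures.
report = error_budget(0.999, 1_000_000, 400)
print(f"SLI={report['sli']:.4%}, budget consumed={report['budget_consumed']:.0%}")
```

With these assumed numbers, the SLO allows about 1,000 failed requests, so 400 failures consume roughly 40% of the budget.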

Core Categories of SRE Tools

Modern SRE tooling generally falls into five categories:

- Monitoring and Observability

- Log Management and Analytics

- Incident Management

- Configuration Management and Automation

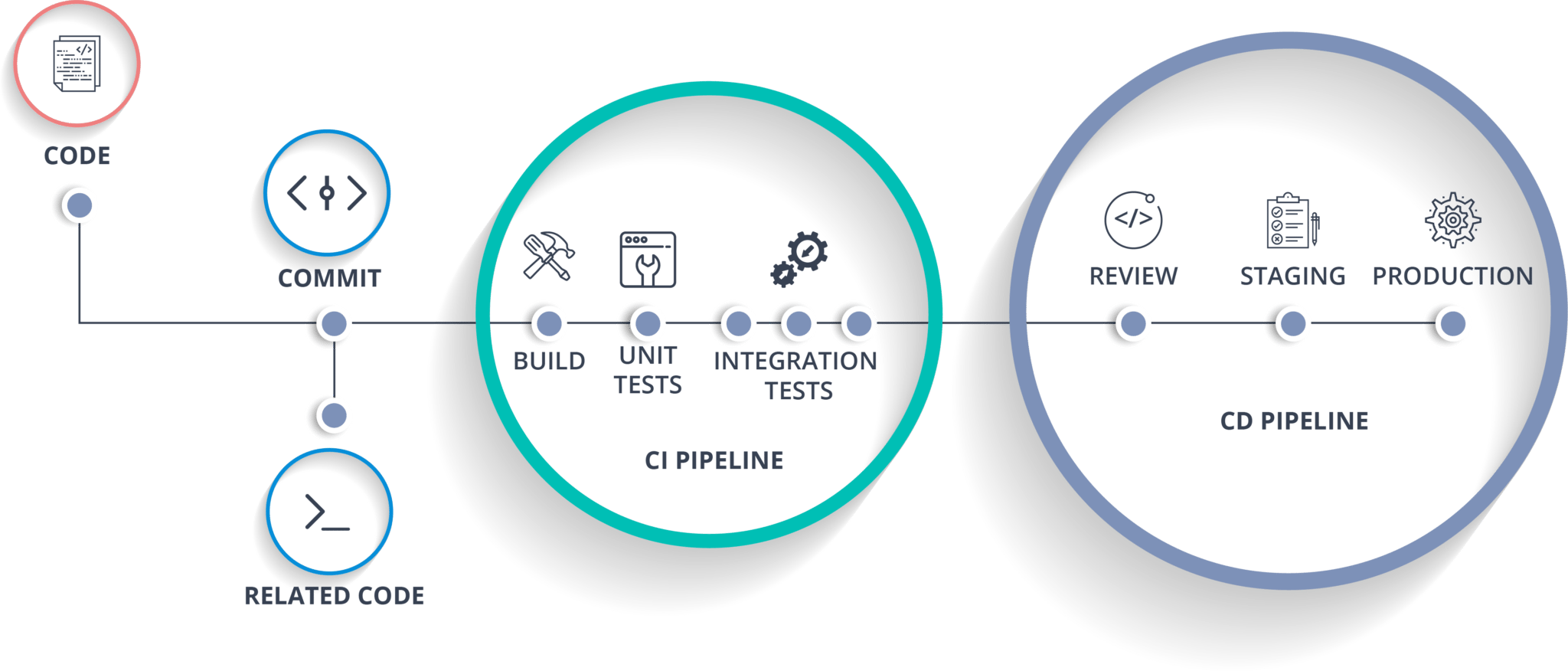

- CI/CD and Reliability Enablement

Let’s explore the most widely used tools in each category.

Monitoring and Observability Tools

1. Prometheus

Prometheus is the de facto standard for metrics monitoring in cloud-native environments. It uses a pull-based model to scrape metrics over HTTP and stores them as time-series data.

Why SREs use Prometheus:

- Powerful query language (PromQL)

- Native Kubernetes integration

- Fine-grained metrics labeling

- Strong alerting with Alertmanager

Prometheus excels at measuring SLIs, making it a foundational SRE tool.
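As a rough illustration of the pull model, the sketch below renders a counter in the Prometheus text exposition format, which is what a scrape target returns over HTTP (conventionally at `/metrics`). The metric name `http_requests_total`, the labels, and the helper `render_counter` are assumptions made for this example. A real service would normally use an official client library rather than formatting the text by hand.

```python
def render_counter(name: str, help_text: str, samples: dict) -> str:
    """Render one counter in the Prometheus text exposition format.

    `samples` maps a tuple of (label_name, label_value) pairs to a value.
    Label values are not escaped here, so this is only a sketch.
    """
    lines = [f"# HELP {name} {help_text}", f"# TYPE {name} counter"]
    for labels, value in sorted(samples.items()):
        label_str = ",".join(f'{key}="{val}"' for key, val in labels)
        lines.append(f"{name}{{{label_str}}} {value}")
    return "\n".join(lines) + "\n"


# Hypothetical request counts, split by method and status labels.
samples = {
    (("method", "GET"), ("status", "200")): 1027,
    (("method", "GET"), ("status", "500")): 3,
}
print(render_counter("http_requests_total", "Total HTTP requests.", samples))
```

Each label combination becomes its own time series, which is what makes fine-grained SLI queries in PromQL possible.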

2. Grafana

Grafana transforms raw metrics into clear, actionable dashboards. It integrates seamlessly with Prometheus, Elasticsearch, Loki, cloud providers, and many other data sources.

Key strengths:

- Real-time dashboards

- Custom alerts

- Team collaboration

- Single-pane-of-glass visibility

Grafana is often the visual layer of an SRE observability stack.

3. New Relic

New Relic provides full-stack observability across applications, infrastructure, logs, and user experience.

Best for:

- Application Performance Monitoring (APM)

- Distributed tracing

- Real user monitoring

- Change impact analysis

Its gentle learning curve makes it popular among teams transitioning into SRE.

4. Datadog

Datadog is an all-in-one observability platform used heavily in SaaS and cloud-first companies.

Why Datadog stands out:

- Automatic anomaly detection

- Infrastructure, APM, logs, and security in one platform

- Watchdog-driven intelligent alerts

- Excellent cloud integrations

Datadog helps SREs detect issues before users feel them.
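To give a feel for what anomaly detection means in practice, here is a deliberately simple z-score check. It is not Datadog's algorithm, which is proprietary and far more sophisticated. The function name, threshold, and latency values are assumptions for illustration.

```python
from statistics import mean, stdev


def is_anomalous(history: list[float], latest: float, threshold: float = 3.0) -> bool:
    """Flag `latest` if it sits more than `threshold` standard deviations from the mean."""
    if len(history) < 2:
        return False  # not enough data to estimate spread
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        # A perfectly flat history: any change at all counts as unusual.
        return latest != mu
    return abs(latest - mu) / sigma > threshold


# Hypothetical p95 latency samples in milliseconds.
recent_latency_ms = [100, 102, 98, 101, 99]
print(is_anomalous(recent_latency_ms, 100))
print(is_anomalous(recent_latency_ms, 150))
```

Production systems usually also account for seasonality and trends, which a plain z-score ignores.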

5. Nagios

Nagios is one of the oldest monitoring tools still widely used today, especially in enterprise and legacy environments.

Strengths:

- Plugin-based architecture

- Host and service monitoring

- Strong community ecosystem

While modern stacks may move beyond Nagios, it remains relevant for traditional infrastructures.

6. AppDynamics

AppDynamics focuses on business-centric application monitoring, correlating performance metrics with real business outcomes.

Key features:

- End-to-end transaction tracing

- Anomaly detection

- Root cause analysis

- SAP and enterprise system monitoring

It is commonly used in large enterprises with complex application landscapes.

Log Management and Analytics Tools

7. Kibana

Kibana is the visualization layer of the Elastic ecosystem, enabling powerful log exploration and analysis.

Why SREs rely on Kibana:

- Fast log searching

- Threat investigation

- Unified observability UI

- Native Elasticsearch integration

Logs become a debugging superpower when paired with Kibana.

8. Splunk

Splunk is an AI-driven observability and security platform widely adopted in mission-critical environments.

Splunk excels at:

- Real-time log analytics

- Predictive alerts

- Security and compliance

- High-volume data ingestion

It is often used where downtime has serious financial or regulatory impact.

9. ELK Stack (Elasticsearch, Logstash, Kibana)

The ELK Stack provides a flexible, open-source solution for collecting, processing, and visualizing logs.

Why ELK is popular:

- Works with any data source

- Highly customizable dashboards

- Scalable architecture

ELK is ideal for teams that want full control over their observability pipeline.
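The processing step of such a pipeline can be pictured as turning raw log lines into structured events that can then be indexed and searched. The sketch below is plain Python rather than Logstash configuration, and the log layout (timestamp, level, service, message) is an assumed format chosen for this example.

```python
import re
from collections import Counter
from typing import Optional

# Assumed line layout: "<timestamp> <LEVEL> <service> <free-text message>"
LOG_PATTERN = re.compile(
    r"^(?P<timestamp>\S+)\s+(?P<level>[A-Z]+)\s+(?P<service>\S+)\s+(?P<message>.*)$"
)


def parse_line(line: str) -> Optional[dict]:
    """Parse one raw line into a structured event, or None if it does not match."""
    match = LOG_PATTERN.match(line.strip())
    return match.groupdict() if match else None


def count_by_level(lines: list[str]) -> Counter:
    """Count parsed events per log level, skipping malformed lines."""
    events = (parse_line(line) for line in lines)
    return Counter(event["level"] for event in events if event is not None)


raw_logs = [
    "2024-05-01T12:00:00Z ERROR payment-service Timeout calling gateway",
    "2024-05-01T12:00:01Z INFO payment-service Retry succeeded",
    "malformed",
    "2024-05-01T12:00:05Z ERROR checkout-service Upstream returned 502",
]
print(count_by_level(raw_logs))
```

Once events are structured like this, questions such as "how many errors per service in the last hour" become simple aggregations.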

Incident Management Tools

10. PagerDuty

PagerDuty is a cornerstone of modern incident response.

Core capabilities:

- On-call scheduling

- Intelligent alert routing

- Incident automation

- Post-incident analytics

PagerDuty ensures the right engineer is notified at the right time.
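The idea behind on-call scheduling can be sketched as a round-robin rotation lookup. This is a simplified model, not PagerDuty's API or scheduling logic. The engineer names, the weekly shift length, and the function `on_call_engineer` are hypothetical.

```python
from datetime import datetime, timedelta, timezone


def on_call_engineer(
    rotation: list[str], rotation_start: datetime, shift: timedelta, now: datetime
) -> str:
    """Return who is on call at `now` in a fixed-length round-robin rotation."""
    if not rotation:
        raise ValueError("rotation must contain at least one engineer")
    # Number of whole shifts elapsed since the rotation began.
    elapsed_shifts = (now - rotation_start) // shift
    return rotation[elapsed_shifts % len(rotation)]


# Hypothetical weekly rotation starting on 1 January 2024 (UTC).
rotation = ["alice", "bob", "carol"]
start = datetime(2024, 1, 1, tzinfo=timezone.utc)
week = timedelta(days=7)
print(on_call_engineer(rotation, start, week, datetime(2024, 1, 10, tzinfo=timezone.utc)))
```

Real schedules add overrides, time-zone handoffs, and escalation layers on top of this basic lookup.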

11. Asana

Although primarily a project management tool, Asana is often used by SRE teams for:

- Incident follow-ups

- Reliability initiatives

- Postmortem action tracking

Its automation and AI features improve cross-team coordination.

12. Splunk On-Call (VictorOps)

Splunk On-Call specializes in fast, targeted incident resolution.

Highlights:

- Context-rich alerts

- Escalation policies

- Mobile-first incident handling

It reduces alert fatigue and shortens Mean Time to Resolution (MTTR).
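MTTR itself is simple arithmetic: the average time between an incident being opened and being resolved. The sketch below assumes incidents are available as (opened, resolved) timestamp pairs. The function name and sample data are illustrative.

```python
from datetime import datetime, timedelta


def mean_time_to_resolution(incidents: list[tuple[datetime, datetime]]) -> timedelta:
    """Average the open-to-resolved duration across a list of incidents."""
    if not incidents:
        raise ValueError("at least one incident is required")
    total = sum((resolved - opened for opened, resolved in incidents), timedelta())
    return total / len(incidents)


# Two hypothetical incidents lasting 30 and 90 minutes.
incidents = [
    (datetime(2024, 3, 1, 9, 0), datetime(2024, 3, 1, 9, 30)),
    (datetime(2024, 3, 8, 14, 0), datetime(2024, 3, 8, 15, 30)),
]
print(mean_time_to_resolution(incidents))
```

Tracking this number over time shows whether alerting and escalation changes are actually helping.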

Configuration Management and Automation Tools

13. Ansible

Ansible simplifies automation using human-readable YAML playbooks.

Used for:

- Configuration management

- Application deployment

- Infrastructure orchestration

Its agentless architecture makes it easy to adopt and scale.

14. Terraform

Terraform is one of the most widely adopted tools for Infrastructure as Code (IaC).

Why SREs depend on Terraform:

- Declarative infrastructure

- Multi-cloud support

- Version-controlled environments

- Policy and access enforcement

Terraform enables reliable, repeatable infrastructure provisioning.
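The declarative idea can be illustrated with a toy diff between desired and actual state. This is a conceptual sketch only. Terraform's real planner works from a state file and a resource dependency graph, and the resource names and attributes below are made up.

```python
def plan(desired: dict, actual: dict) -> dict:
    """Compute which resources to create, update, or delete to reach `desired`."""
    to_create = sorted(desired.keys() - actual.keys())
    to_delete = sorted(actual.keys() - desired.keys())
    to_update = sorted(
        name for name in desired.keys() & actual.keys() if desired[name] != actual[name]
    )
    return {"create": to_create, "update": to_update, "delete": to_delete}


# Hypothetical resources keyed by name, each with a small attribute dict.
desired = {
    "web-server": {"size": "medium"},
    "database": {"size": "large"},
    "cache": {"size": "small"},
}
actual = {
    "web-server": {"size": "small"},
    "database": {"size": "large"},
    "old-queue": {"size": "small"},
}
print(plan(desired, actual))
```

Because the plan is derived from a description of the end state rather than a list of steps, running it again after the changes are applied yields an empty plan, which is what makes provisioning repeatable.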

15. Jenkins

Jenkins remains a widely used CI/CD automation tool.

Strengths:

- Extensive plugin ecosystem

- Pipeline automation

- Integration with almost any tool

In SRE workflows, Jenkins supports safe deployments and reliability testing.
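One way a pipeline stage might support safe deployments is a canary gate that compares the new version's error rate with the current baseline before promoting it. The sketch below is not a Jenkins feature or plugin. The function name, tolerance, and minimum traffic threshold are assumptions, and a pipeline step would call something like it and fail the build on a `False` result.

```python
def canary_is_healthy(
    baseline_error_rate: float,
    canary_errors: int,
    canary_requests: int,
    tolerance: float = 0.005,
    min_requests: int = 500,
) -> bool:
    """Decide whether a canary release looks safe to promote."""
    if canary_requests < min_requests:
        return False  # too little traffic to judge; hold the rollout
    canary_error_rate = canary_errors / canary_requests
    # Allow the canary to be slightly worse than baseline, but no more.
    return canary_error_rate <= baseline_error_rate + tolerance


# Hypothetical numbers: 1% baseline error rate, canary served 1,000 requests.
print(canary_is_healthy(0.01, canary_errors=5, canary_requests=1000))
print(canary_is_healthy(0.01, canary_errors=30, canary_requests=1000))
```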

Key Features to Look for in SRE Tools

When selecting SRE tools, prioritize:

- Automation and self-healing

- Seamless integrations

- Scalability and performance

- Strong alerting and analytics

- Reasonable learning curve and pricing

The best tools align with your system complexity and team maturity.

SRE Tools vs Traditional DevOps Tools

| SRE Tools | DevOps Tools |
|---|---|
| Focus on reliability | Focus on delivery speed |
| Metrics, SLOs, error budgets | CI/CD and collaboration |
| Failure reduction | Workflow optimization |

SRE complements DevOps by adding engineering rigor to reliability.

Certifications for Aspiring SREs

- SRE Foundation Certification

- SRE Practitioner (DevOps Institute)

- Microsoft Azure DevOps Engineer Expert (AZ-400)

- Certified Reliability Professional (CRP)

- Docker Certified Associate (DCA)

Certifications validate both theoretical knowledge and practical skills.

Final Thoughts

Modern Site Reliability Engineering is impossible without the right tools. However, tools alone do not create reliability—engineering mindset, automation, and continuous learning do.

If you are transitioning into SRE or scaling production systems, mastering these tools will place you on a strong career trajectory in 2026 and beyond.

Want more in-depth SRE, DevOps, and cloud-native guides? Follow InsightClouds for practical, production-ready engineering content.

Next Steps:

DevOps tutorial: https://www.youtube.com/embed/6pdCcXEh-kw?si=c-aaCzvTeD2mH3Gv
